RIP, Gene Amdahl. He was 92, and had been living with Alzheimer's for several years. He passed away on 2015/11/10.

$$
\text{CPU time} = \frac{\text{Seconds}}{\text{Program}} = \frac{\text{Instructions}}{\text{Program}} \times \frac{\text{Clock cycles}}{\text{Instruction}} \times \frac{\text{Seconds}}{\text{Clock cycle}}
$$

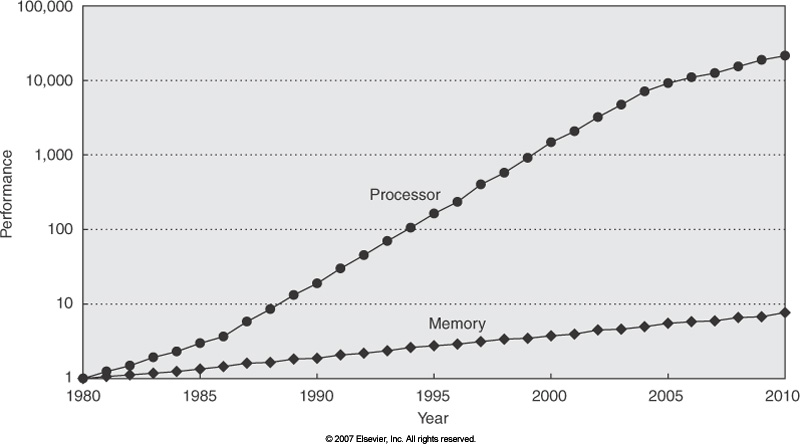

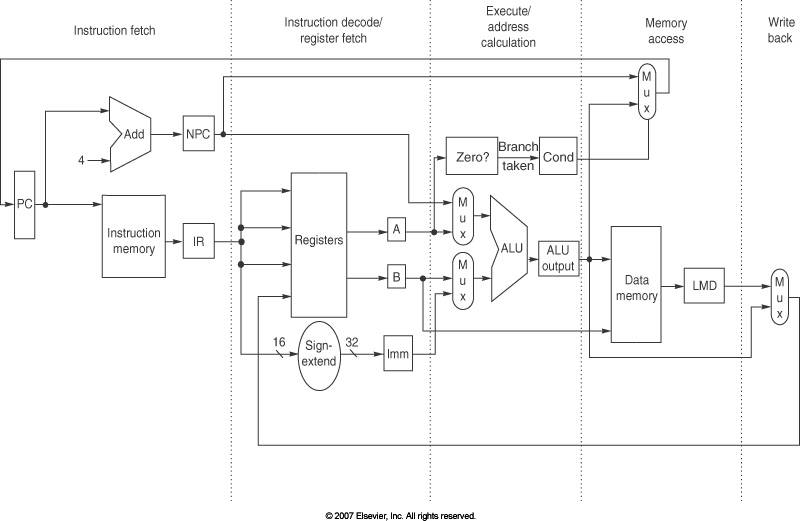

This is the point where I admit that last week I told a white lie: that in the MEM stage of the pipeline, results come back from memory in one clock cycle. In reality, they don't. We need to discuss cache hits and cache misses, hit time and miss penalty.

With those values, we can determine the actual average CPI and expected execution time for a program.
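As a rough sketch of that calculation, the snippet below folds memory stalls into the CPI and then applies the CPU time equation. The function names and all of the numbers (base CPI, memory references per instruction, miss rate, miss penalty, clock rate) are assumptions chosen for illustration, not measurements of any real machine.

```python
def effective_cpi(base_cpi, mem_refs_per_instr, miss_rate, miss_penalty_cycles):
    """Base CPI plus the average memory stall cycles per instruction."""
    stall_cycles = mem_refs_per_instr * miss_rate * miss_penalty_cycles
    return base_cpi + stall_cycles


def cpu_time_seconds(instruction_count, cpi, clock_hz):
    """CPU time = instructions x (cycles / instruction) x (seconds / cycle)."""
    return instruction_count * cpi / clock_hz


# Hypothetical workload: 1 billion instructions on a 2 GHz clock.
cpi = effective_cpi(
    base_cpi=1.0,             # assumed ideal pipeline CPI
    mem_refs_per_instr=0.25,  # assumed fraction of loads/stores
    miss_rate=0.04,           # assumed cache miss rate
    miss_penalty_cycles=100,  # assumed miss penalty
)
print(f"effective CPI: {cpi:.2f}")
print(f"CPU time: {cpu_time_seconds(1_000_000_000, cpi, 2_000_000_000):.2f} s")
```

With these made-up inputs, the stalls contribute as many cycles per instruction as the pipeline itself, which is why the miss rate and miss penalty matter so much.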

We have now reached the point where it is imperative that you be able to extract and manipulate individual bitfields from larger words. I did some chalkboard exercises last week; today it's your turn.
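If you want to check your answers, here is a minimal sketch of the shift-and-mask idiom. The helper names `extract_bits` and `insert_bits` are made up for this example, and bit 0 is assumed to be the least significant bit.

```python
def extract_bits(word, low, width):
    """Return the `width`-bit field of `word` whose lowest bit is at position `low`."""
    mask = (1 << width) - 1       # e.g. width=8 -> 0xFF
    return (word >> low) & mask   # shift the field down, then mask off the rest


def insert_bits(word, low, width, value):
    """Return `word` with the field at [low, low + width) replaced by `value`."""
    mask = ((1 << width) - 1) << low
    return (word & ~mask) | ((value << low) & mask)


word = 0xDEADBEEF
print(hex(extract_bits(word, 8, 8)))        # bits 15..8
print(hex(extract_bits(word, 28, 4)))       # top nibble of a 32-bit word
print(hex(insert_bits(word, 8, 8, 0x00)))   # clear bits 15..8
```

The same two operations, shift then mask, are all that is needed to pull a tag, index, or offset out of an address.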

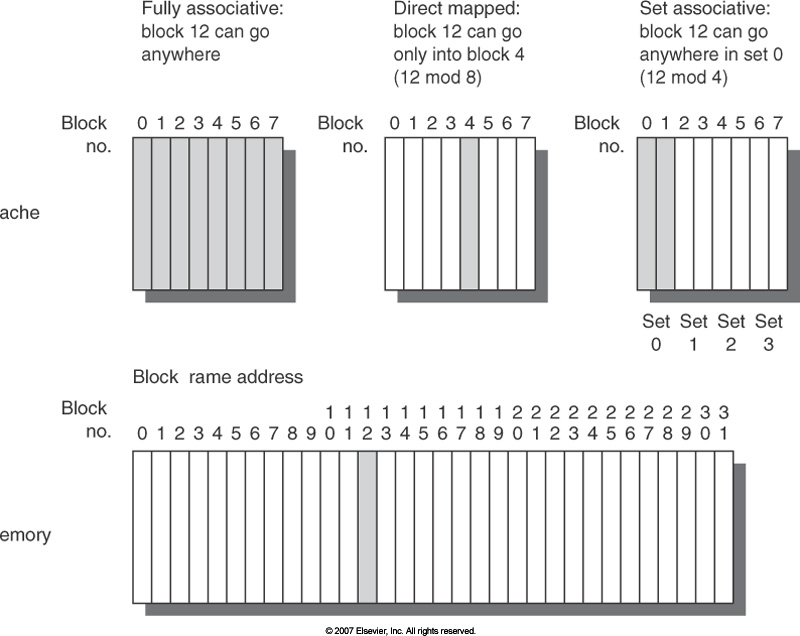

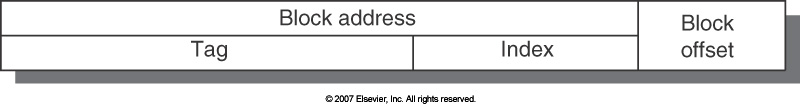

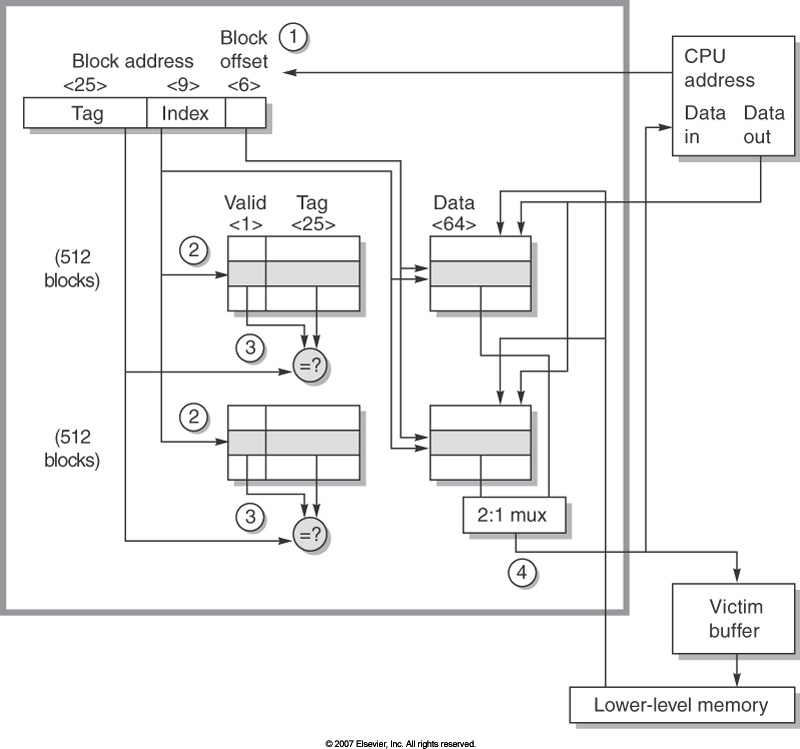

The AMD Opteron processor uses a 64KB cache, two-way set associative, 64 byte blocks. Addresses are 40 bit physical addresses.
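Those four parameters are enough to work out how an address splits into tag, index, and block offset. The sketch below does the arithmetic from the figures quoted above; the constant names, the `split_address` helper, and the sample address are assumptions for illustration only.

```python
CACHE_BYTES = 64 * 1024   # 64KB cache
WAYS = 2                  # two-way set associative
BLOCK_BYTES = 64          # 64-byte blocks
ADDRESS_BITS = 40         # 40-bit physical addresses

NUM_SETS = CACHE_BYTES // (BLOCK_BYTES * WAYS)
OFFSET_BITS = BLOCK_BYTES.bit_length() - 1   # log2 of a power of two
INDEX_BITS = NUM_SETS.bit_length() - 1
TAG_BITS = ADDRESS_BITS - INDEX_BITS - OFFSET_BITS


def split_address(addr):
    """Split a physical address into (tag, set index, block offset)."""
    offset = addr & (BLOCK_BYTES - 1)
    index = (addr >> OFFSET_BITS) & (NUM_SETS - 1)
    tag = addr >> (OFFSET_BITS + INDEX_BITS)
    return tag, index, offset


print(f"sets={NUM_SETS}, tag={TAG_BITS} bits, "
      f"index={INDEX_BITS} bits, offset={OFFSET_BITS} bits")
tag, index, offset = split_address(0x12_3456_789A)  # arbitrary sample address
print(hex(tag), hex(index), hex(offset))
```

Note that associativity reduces the number of sets, not the number of blocks: the cache still holds 1024 blocks, but they are grouped into 512 sets of two.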

$$
\text{Avg. memory access time} = \text{Hit time} + \text{Miss rate} \times \text{Miss penalty}
$$
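As a quick sanity check of the formula, here is a one-function sketch. The function name `amat` and the sample values (a 1-cycle hit, a 5% miss rate, a 100-cycle miss penalty) are assumptions, not figures for any particular processor.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time, in the same units as hit_time and miss_penalty."""
    return hit_time + miss_rate * miss_penalty


# Assumed values: 1-cycle hit, 5% of accesses miss, 100-cycle miss penalty.
print(f"AMAT = {amat(1, 0.05, 100):.1f} cycles")
```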

Fortunately, many processors support out of order execution, which allows other instructions to complete while a load is waiting on memory, provided that there is no data hazard.

With a miss penalty that high, cache hit rates must exceed 90%, and in practice they are usually above 95%. Even a small improvement in cache hit rate can make a large improvement in system performance.
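To see how sensitive the average access time is to the hit rate, the sketch below sweeps a few hit rates while holding everything else fixed. The 1-cycle hit time and 100-cycle miss penalty are assumed values, and `access_time_for_hit_rate` is a name invented for this example.

```python
HIT_TIME = 1        # cycles, assumed
MISS_PENALTY = 100  # cycles, assumed


def access_time_for_hit_rate(hit_rate):
    """Average access time in cycles for a given hit rate (0.0 to 1.0)."""
    return HIT_TIME + (1.0 - hit_rate) * MISS_PENALTY


baseline = access_time_for_hit_rate(0.90)
for hit_rate in (0.90, 0.95, 0.97, 0.99):
    cycles = access_time_for_hit_rate(hit_rate)
    print(f"hit rate {hit_rate:.0%}: {cycles:5.1f} cycles "
          f"({baseline / cycles:.2f}x faster than at 90%)")
```

Under these assumptions, moving from a 95% to a 97% hit rate removes a third of the average access time, even though the hit rate changed by only two percentage points.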

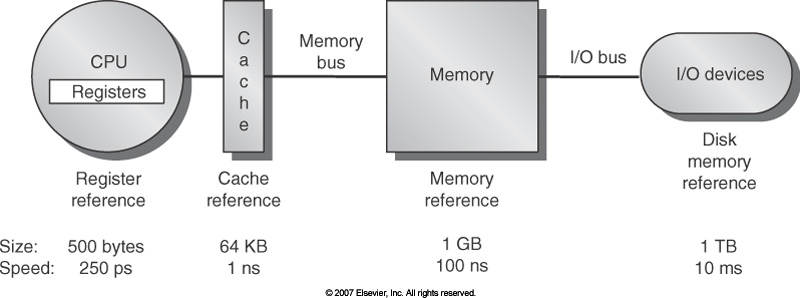

The principal reason is that there is a direct tradeoff between size and performance: it's always easier to look through a small number of things than a large number of things when you're looking for something. In general-purpose systems, it's also not possible to fit all of the RAM we would like to have onto the same chip as the CPU; you likely have a couple of dozen memory chips in your laptop.

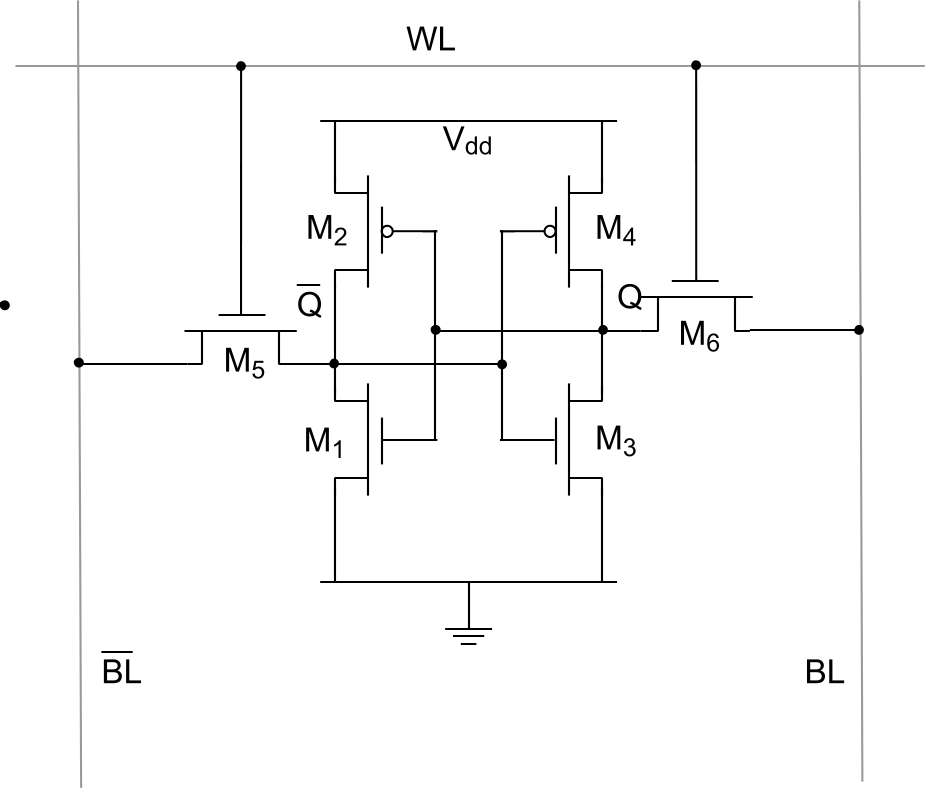

In standard computer technology, too, we have the distinction of DRAM versus SRAM. DRAM is slower, but much denser, and is therefore much cheaper. SRAM is faster and more expensive. SRAM is generally used for on-chip caches. The capacity of DRAM is roughly 4-8 times that of SRAM, while SRAM is 8-16 times faster and 8-16 times more expensive.

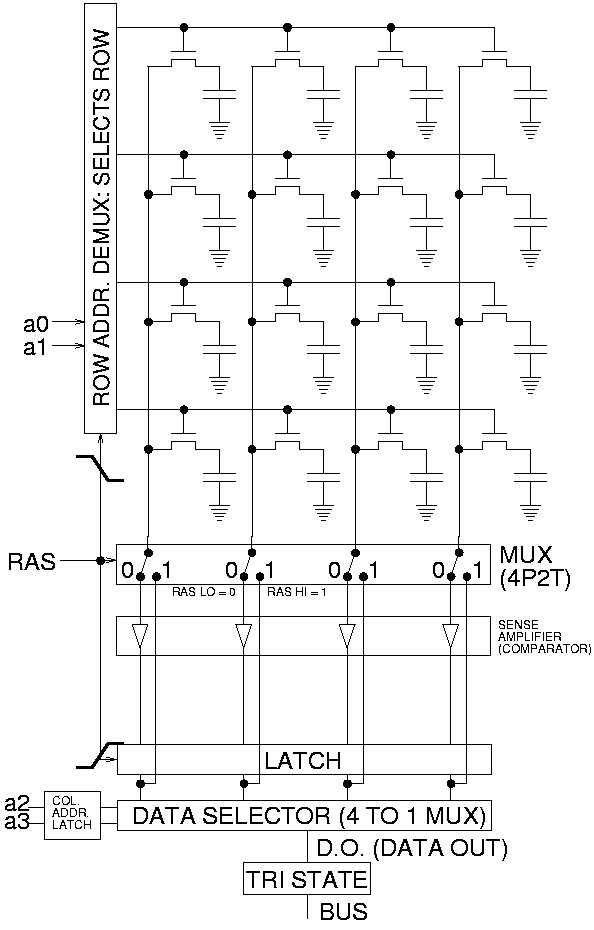

An SRAM cell typically requires six transistors, while a DRAM cell requires only one transistor and one capacitor. DRAMs are often read in bursts that fill at least one cache block.

In DRAM circuits, by contrast, a single bit of memory requires only a single transistor (plus a capacitor to hold the charge):